Not All "Eyes" Are Made Equal

Manufacturing always introduces minor hardware differences between units. For a pair of cameras used for stereoscopic video recording, such differences can create a mismatch between the left and right images, which can make viewing very uncomfortable.

Several types of left/right image discrepancies need to be compensated, including vertical shift, horizontal shift, keystone, rotation, zoom and distortion. Some discrepancies are more tolerable than others. For example, a horizontal shift is not a big issue because viewers naturally compensate for it laterally through eye vergence. A vertical difference, however, cannot be compensated by the viewer's eyes. It is therefore important for the calibration process to correct the most problematic discrepancies first.

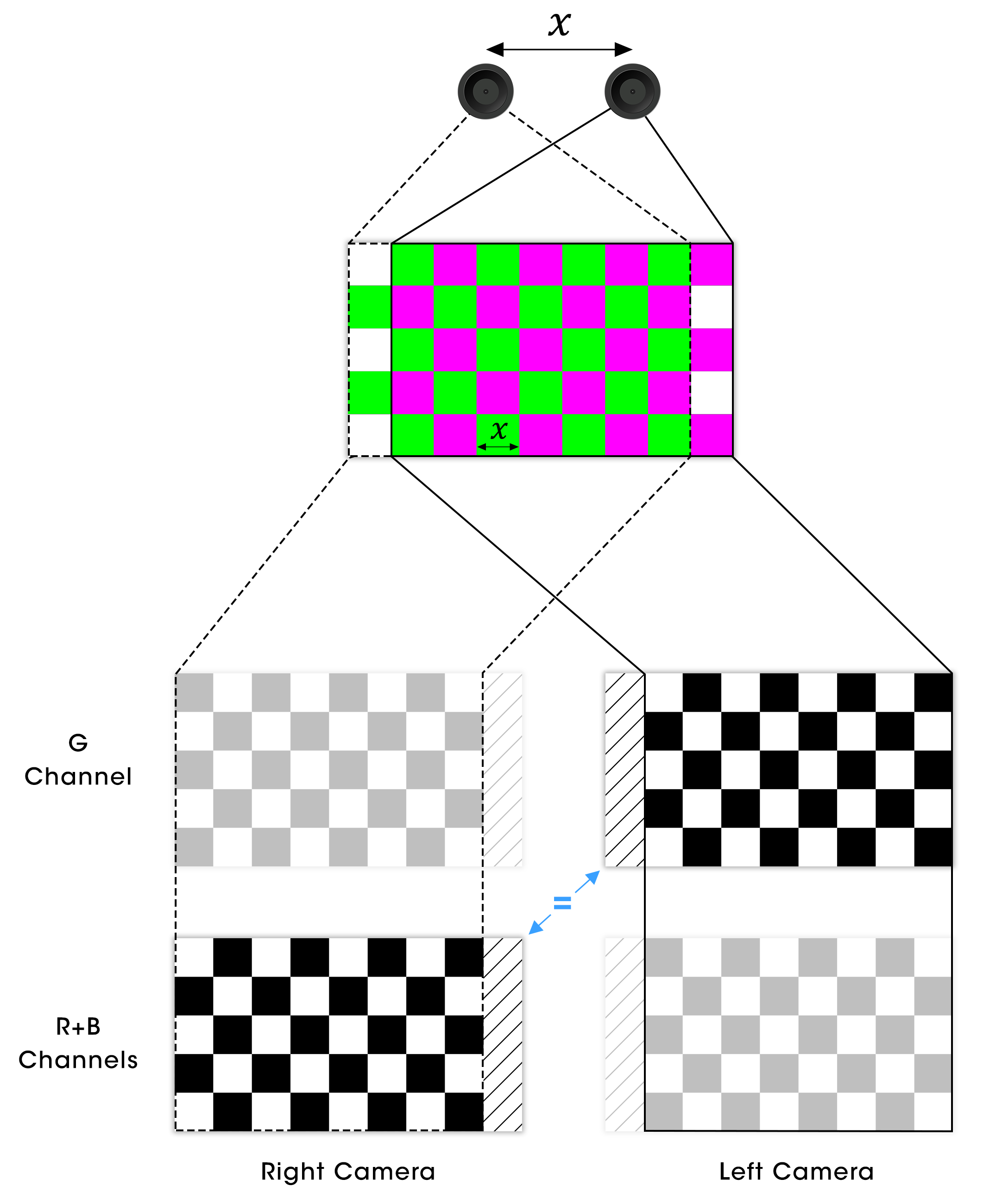
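One way to quantify several of these discrepancies at once, sketched here under our own assumptions rather than as the calibration method used in this post, is to fit a 2D similarity transform (zoom, rotation, horizontal shift and vertical shift) that maps matched feature points in the right image onto the left image. The function name `fit_similarity` and its input format (lists of `(x, y)` pairs, ideally measured relative to the image center) are hypothetical. Keystone and lens distortion are not modeled.

```python
import cmath
import math


def fit_similarity(left_pts, right_pts):
    """Hypothetical helper: least-squares fit of left ~= a * right + t.

    Points are treated as complex numbers x + iy. |a| is the zoom ratio,
    arg(a) the rotation (in the image coordinate frame), and t.real / t.imag
    the horizontal / vertical shift. Keystone and distortion are ignored.
    """
    if len(left_pts) != len(right_pts) or len(left_pts) < 2:
        raise ValueError("need at least two matched point pairs")
    zl = [complex(x, y) for x, y in left_pts]
    zr = [complex(x, y) for x, y in right_pts]
    n = len(zl)
    ml, mr = sum(zl) / n, sum(zr) / n          # centroids
    # Closed-form least squares for a on the centered points.
    num = sum((r - mr).conjugate() * (l - ml) for l, r in zip(zl, zr))
    den = sum(abs(r - mr) ** 2 for r in zr)
    if den == 0:
        raise ValueError("right-image points must not all coincide")
    a = num / den
    t = ml - a * mr                            # translation after zoom/rotation
    return {
        "zoom": abs(a),
        "rotation_deg": math.degrees(cmath.phase(a)),
        "horizontal_shift": t.real,
        "vertical_shift": t.imag,
    }
```

Because horizontal offsets are absorbed by eye vergence, a routine built on a fit like this would look at `vertical_shift` and `rotation_deg` first; rotation also produces vertical offsets away from the image center.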

To correct left/right image discrepancies, one needs to ensure that both cameras “see” the same reference image. In theory, the easiest way to do this is to point them at an image at an “infinite” distance (in practice, one that is far enough away). Needless to say, this is not practical in a factory environment. One trick to solve this problem is to use the red, green and blue channels of each camera separately. Say we present the cameras with a green and purple¹ checkerboard pattern in which the green channel is shifted horizontally from the red and blue channels by the distance that separates the two cameras. If we keep only the green channel from the left camera and the red and blue channels from the right camera, both see the same pattern, without any keystone effect.
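Why the channel offset works can be seen in an idealized model (our assumption, not stated in the post): two pinhole cameras with focal length $f$ in pixels and parallel optical axes, the left one at the origin and the right one offset by the baseline $b$, viewing a flat target perpendicular to those axes. A point $(X, Y, Z)$ projects to

$$
x_L = \frac{fX}{Z}, \qquad x_R = \frac{f\,(X - b)}{Z}, \qquad y_L = y_R = \frac{fY}{Z}, \qquad d = x_L - x_R = \frac{fb}{Z}
$$

so the disparity $d$ only vanishes as $Z \to \infty$, which is the “infinite” distance case. If instead the green feature sits at $X$ and its red/blue copy at $X + b$ on a target plane at distance $Z_0$:

$$
x_L^{G} = \frac{fX}{Z_0}, \qquad x_R^{RB} = \frac{f\,\big((X + b) - b\big)}{Z_0} = \frac{fX}{Z_0} = x_L^{G}
$$

Under this model the cancellation holds for any $Z_0$, so any remaining difference between the two selected channels comes from the cameras themselves.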

This way, without needing an image at an “infinite” distance, we obtain matching images from the green channel of the left camera and the red and blue channels of the right camera, which simplifies the whole calibration process.
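The trick can be illustrated with a deliberately simplified toy model (our assumption): each camera is treated as a window onto the target image with no perspective or lens effects, and the right camera's window is offset by the baseline, expressed in target pixels. The helper names `checker`, `make_target`, `view` and `matched_pair` are hypothetical. The target's red and blue layers are the green checkerboard shifted right by the baseline, so the left camera's green channel and the right camera's red/blue channels come out identical.

```python
def checker(x, y, square):
    """255/0 checkerboard value at (x, y) for squares of `square` pixels."""
    return 255 if ((x // square) + (y // square)) % 2 == 0 else 0


def make_target(width, height, square, baseline_px):
    """Hypothetical calibration target as rows of (r, g, b) tuples.

    Green layer: plain checkerboard. Red and blue layers: the same
    checkerboard shifted right by baseline_px (camera separation in target pixels).
    """
    return [
        [
            (checker(x - baseline_px, y, square),   # red
             checker(x, y, square),                 # green
             checker(x - baseline_px, y, square))   # blue
            for x in range(width)
        ]
        for y in range(height)
    ]


def view(target, x0, width):
    """Toy camera: sees target columns [x0, x0 + width), no perspective."""
    return [row[x0:x0 + width] for row in target]


def matched_pair(left_rgb, right_rgb):
    """Keep green from the left view and the red/blue average from the right view."""
    left_green = [[g for _, g, _ in row] for row in left_rgb]
    right_rb = [[(r + b) // 2 for r, _, b in row] for row in right_rgb]
    return left_green, right_rb


if __name__ == "__main__":
    W, H, SQUARE, BASELINE = 32, 16, 4, 6
    target = make_target(W + BASELINE, H, SQUARE, BASELINE)
    left = view(target, 0, W)            # left camera
    right = view(target, BASELINE, W)    # right camera, one baseline to the right
    left_green, right_rb = matched_pair(left, right)
    print(left_green == right_rb)  # True
```

Comparing the same channel from both cameras (green against green, for example) would still show the baseline offset; the shifted red/blue layer is what cancels it.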

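Separating the green channel from the red and blue channels makes sense because of the Bayer structure of camera sensors (50% green, 25% red and 25% blue): the green channel and the combined red and blue channels each cover half of the sensor's photosites.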
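The even split can be seen on a raw mosaic. The sketch below assumes an RGGB layout (the post does not say which Bayer variant the sensors use) and a hypothetical `raw` frame given as a list of rows; the helper names are ours. It shows that the green photosites and the red plus blue photosites form two complementary checkerboards of equal size.

```python
def bayer_channel(x, y):
    """Color filter at photosite (x, y), assuming an RGGB Bayer layout."""
    if y % 2 == 0:                       # even rows: R G R G ...
        return "R" if x % 2 == 0 else "G"
    return "G" if x % 2 == 0 else "B"    # odd rows:  G B G B ...


def split_green_vs_red_blue(raw):
    """Split a raw mosaic into green samples and red+blue samples.

    Returns two dicts mapping (x, y) -> raw value.
    """
    green, red_blue = {}, {}
    for y, row in enumerate(raw):
        for x, value in enumerate(row):
            bucket = green if bayer_channel(x, y) == "G" else red_blue
            bucket[(x, y)] = value
    return green, red_blue


if __name__ == "__main__":
    raw = [[0] * 4 for _ in range(4)]
    green, red_blue = split_green_vs_red_blue(raw)
    print(len(green), len(red_blue))  # 8 8
```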

The information in our tech blogs is provided as a way to share knowledge for educational and research purposes. However, aspects of the described technology may be subject to Nintendo patents and/or pending patent applications.

---

¹ A color combination loved by programmers and hated by artists.